Learning LLMs From Scratch in 2025 (Free GitHub Roadmap)

Learning Large Language Models (LLMs) from scratch in 2025 is confusing.

Most resources are either too theoretical, locked behind paid courses, or scattered across dozens of blog posts and videos with no clear end-to-end path.

This guide addresses that problem by walking you through a free, end-to-end GitHub roadmap for learning LLMs from scratch, covering fundamentals, model architecture, training concepts, fine-tuning, and practical implementation.

What does “learning LLM from scratch” actually mean?

When people hear “learning LLM from scratch”, they often assume it means training a massive model like GPT-4 from raw internet data. That’s not what it means in practice.

Learning LLMs from scratch means understanding and building the core ideas step by step, instead of treating models as black boxes.

In simple terms, it involves:

- Understanding how transformers work at a conceptual and code level

- Implementing key components like tokenization, attention, and embeddings

- Training small-scale models locally or on limited compute to grasp how learning actually happens

- Learning when to build, fine-tune, or reuse existing open-source models

- Building real applications using LLMs instead of just running demos
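To make "implementing key components" concrete, here is a minimal sketch of single-head scaled dot-product attention in plain Python. It is an illustration written for this guide, not code from the roadmap: the function names `softmax` and `attention` and the toy input are assumptions, and it leaves out masking, learned projection matrices, and batching.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention for one head, without masking.

    queries and keys are lists of vectors with the same dimension d;
    values holds one vector per key. Returns one output vector per
    query, plus the attention weights so they can be inspected.
    """
    d = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Toy "sequence" of three 2-dimensional token vectors (made up for illustration).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(x, x, x)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how strongly one token attends to the others, and every row sums to 1. Changing the vectors in `x` and watching the weights shift is a quick way to build intuition.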

You are not expected to:

- Train billion-parameter models

- Own expensive GPUs

- Recreate GPT

The goal is clarity, not scale.

If you can read model code, modify it, fine-tune an open-source LLM, and build practical projects on top of it, you have effectively learned LLMs from scratch.

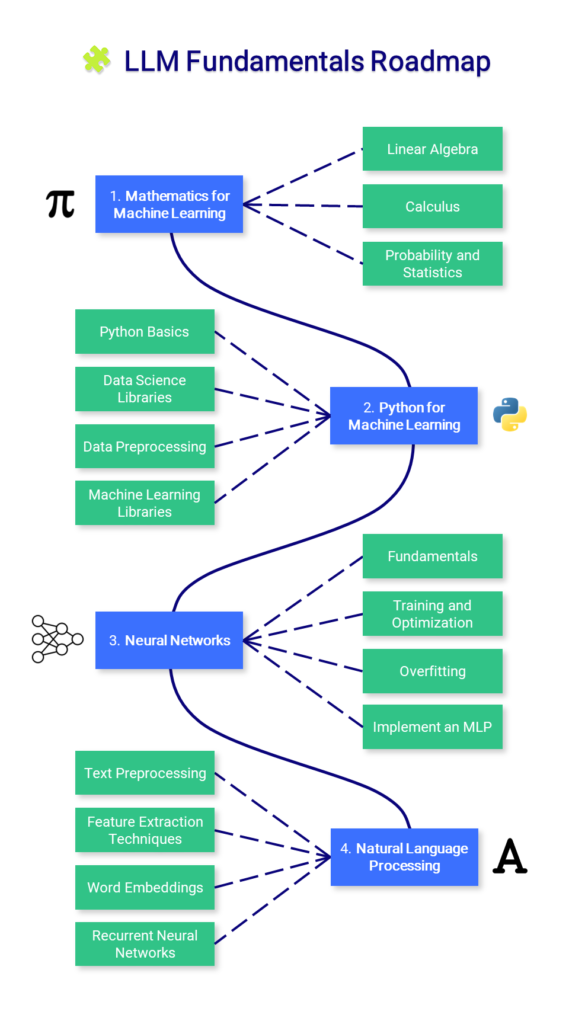

The LLM Fundamentals Roadmap

This roadmap covers the foundational knowledge required to understand Large Language Models — including mathematics, Python, neural networks, and basic NLP concepts.

You do not need to master everything here before moving forward. Think of this roadmap as the base layer that supports all practical LLM work.

How to Start Learning LLMs Using This Roadmap

You don’t need to complete everything in this roadmap to make progress. The goal is to move from understanding to building as quickly as possible.

Step 1: Pick your primary goal

Choose one path based on what you want to achieve:

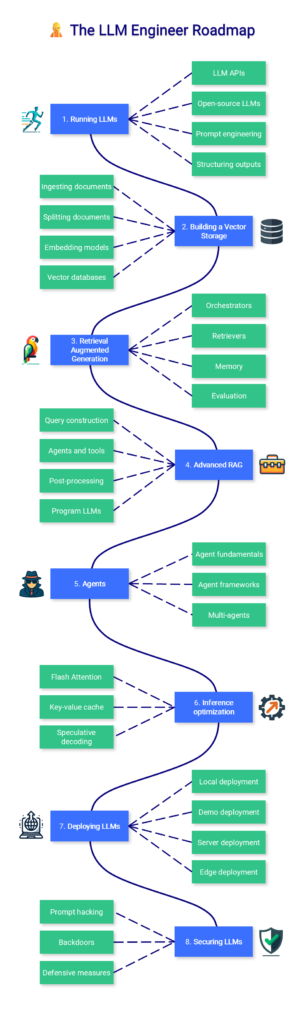

- Build real LLM-powered applications: start with the LLM Engineer path

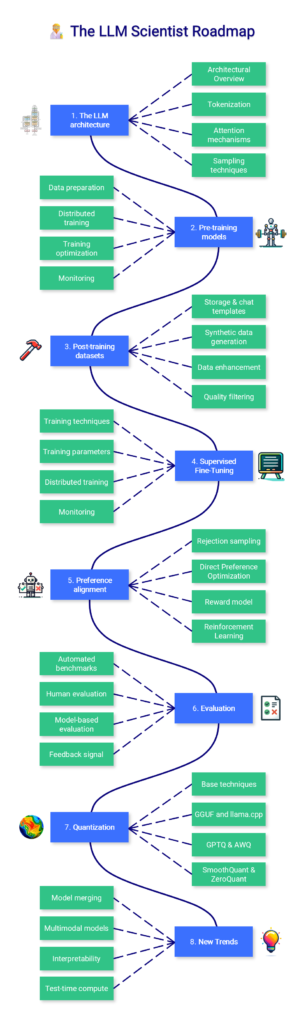

- Understand how LLMs work internally: start with the LLM Scientist path

You can always come back and explore the other path later.

Step 2: Start with small, practical experiments

Avoid passive learning. As soon as possible:

- Run a small open-source model locally or in Colab

- Read and modify example notebooks

- Break something and fix it

This is how concepts stick.
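An experiment does not need a GPU or even a downloaded model to be useful. As one possible warm-up, the sketch below builds a character-level bigram model in plain Python. It is an assumption of this guide rather than part of the roadmap: the corpus string and the function names are made up, and the "training" is simple counting, not the gradient descent that real LLMs use.

```python
import random
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each character, which characters follow it."""
    counts = defaultdict(Counter)
    for cur, nxt in zip(text, text[1:]):
        counts[cur][nxt] += 1
    return counts


def next_char_probs(counts, cur):
    """Turn the follower counts for one character into probabilities."""
    total = sum(counts[cur].values())
    return {ch: n / total for ch, n in counts[cur].items()}


def generate(counts, start, length, seed=0):
    """Sample up to `length` characters, one at a time, from the counts."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])
        if not followers:
            break  # this character was never followed by anything
        chars, weights = zip(*followers.items())
        out.append(rng.choices(chars, weights=weights)[0])
    return "".join(out)


# Tiny made-up corpus; swap in any text file to experiment.
corpus = "the theory then thanked the thinker"
model = train_bigram(corpus)
probs = next_char_probs(model, "t")
sample = generate(model, "t", 20)
print(probs)
print(sample)
```

Predicting the next token from what came before is the same objective an LLM is trained on, only with far more context and parameters. Try breaking it: feed it a start character that never appears in the corpus, or a longer text, and see what changes.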

Step 3: Use the roadmap as a reference, not a checklist

You are not meant to complete every section linearly.

Use the roadmap to:

- fill knowledge gaps

- understand unfamiliar terms

- decide what to learn next when you’re stuck

That’s exactly how the course was designed.

The GitHub Repository Behind This Roadmap

This roadmap is part of a free, open-source LLM course created by Maxime Labonne.

The repository includes:

- structured learning paths

- hands-on notebooks

- Colab-ready examples

- practical tools for building and deploying LLMs

How to Use This GitHub Repository Effectively

This repository is not meant to be followed step by step from start to finish. Its real value comes from using it based on your current learning goal.

Start with intent, not everything

Begin by scanning the roadmap and identifying what you don’t understand yet. Jump directly to those sections instead of trying to complete the entire repo.

Learn by running the code

Don’t just read.

Run the notebooks locally or in Google Colab, change parameters, experiment, and fix things when they break. This is where LLM concepts actually start to make sense.

Build alongside learning

Use what you learn immediately:

- After tokenization → try writing a simple tokenizer

- After embeddings → build a small semantic search

- After fine-tuning → tweak a small open-source model

Small projects compound fast.
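As a rough idea of how small these projects can be, the sketch below combines the first two: a word-level tokenizer and a tiny search over three made-up documents. Everything in it is an illustrative assumption. In particular, `embed` uses token counts as a stand-in for real embeddings, so the search is lexical rather than truly semantic; swapping in vectors from an embedding model is the natural next step.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def build_vocab(texts):
    """Assign each distinct token an integer id, in order of first appearance."""
    vocab = {}
    for text in texts:
        for tok in tokenize(text):
            vocab.setdefault(tok, len(vocab))
    return vocab


def encode(text, vocab, unk_id=-1):
    """Map text to token ids; tokens missing from the vocab become unk_id."""
    return [vocab.get(tok, unk_id) for tok in tokenize(text)]


def embed(text):
    """Stand-in 'embedding': a sparse vector of token counts."""
    return Counter(tokenize(text))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query, docs):
    """Return docs sorted from most to least similar to the query."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)


# Made-up documents for illustration.
docs = [
    "Tokenization splits text into tokens",
    "Attention lets each token look at other tokens",
    "Fine-tuning adapts a pretrained model to a task",
]
vocab = build_vocab(docs)
ids = encode("attention splits tokens", vocab)
best = search("how does attention work", docs)[0]
print(ids)
print(best)
```

A word-level tokenizer like this one cannot handle unseen words, which is exactly the problem that subword schemes such as byte-pair encoding address. Running into that limit yourself is a good reason to read the tokenization section of the roadmap.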

You can find the full course here:

https://github.com/mlabonne/llm-course